At a Glance

Distinguishing between true AI practitioners and overnight "gurus" is a critical business skill in 2026. A fake AI expert often masks a lack of technical depth with vague, human-centric narratives and factual errors—such as inaccurately defining Large Language Models (LLMs) as simple databases or failing to explain the creative core of Generative AI. To vet expertise effectively, businesses must look past impressive resumes and prioritize transparency of methods, technical accuracy, and the ability to define clear ethical guardrails within a formal AI policy.

An AI Podcast with Impressive Credentials — and Early Warning Signs

Last year, in a weekly newsletter to its small business customers, my insurance company featured a podcast titled "What Small Business Owners Need to Know About AI." That immediately raised a question: why would anyone look to an insurance company for AI education? While customers may be interested in AI-related insurance products, learning about AI itself would typically come from a professional course or a credible practitioner. Still, I assumed it was a good-faith effort and a potential teachable moment.

AI — short for artificial intelligence — has been a dominant topic on corporate agendas since ChatGPT’s launch in November 2022. Many organizations are eager to appear “AI-aware,” with AI training and usage now factoring into performance reviews and incentives. Not wanting to be left behind, my insurance company appeared to have joined the corporate AI bandwagon as well. Viewed this way, the podcast felt less like an effort to educate customers and more like a signal of internal AI awareness — an attempt by the content or marketing team to demonstrate relevance rather than deliver substantive guidance.

To produce the podcast, the company engaged an external “expert.” On paper, the credentials were impressive: multiple management books, columns in well-known publications, appearances on high-profile business shows, speaking engagements, and a software consulting business. Yet within the first 30 seconds of the podcast, it became clear that an impressive résumé does not necessarily translate into real AI expertise.

Podcast Structure

The AI podcast was divided into three sections:

📌 Section I - Definition of Key AI Terms

📌 Section II - Description of an AI Policy

📌 Section III - Guidance regarding AI Software

Each of these sections is reviewed below with verbatim extracts from the insurance company expert's AI podcast and relevant information from ChatGPT and Gemini as needed. Closing thoughts follow the section reviews.


📌Section I - Definition of Key AI Terms

A) Definition of Artificial Intelligence (AI):

📍Expert’s definition of AI - from Podcast 📱

[Image: Expert's definition of AI (from the expert's podcast)]

📍📍Observation: The expert’s definition of AI is strikingly odd. It is unusual enough to distract from its purpose. The heavy use of human-like descriptions makes it sound less like a technical concept and more like a cautionary narrative, which does little to help listeners understand what AI actually is and how it works in practice.

📍ChatGPT’s definition of AI - Prompt / Response 📱

[Image: Prompt to ChatGPT for AI definition]

[Image: Response from ChatGPT - AI definition]

B) Definition of Generative AI:

📍Expert’s definition of Generative AI - from Podcast📱

[Image: Insurance company expert's definition of Generative AI]

[Image: Insurance company expert's definition of Gen AI]

📍📍Observation: We’ve surely heard of and used ChatGPT a lot by now, but we haven’t quite heard Generative AI defined the way the expert did. So, let’s check ChatGPT’s definition of Generative AI.

📍ChatGPT’s definition of Generative AI - Response📱

[Image: ChatGPT's definition of Generative AI]

📍📍Observation: The expert’s explanation was confusing, but ChatGPT's response was clear. Generative AI literally means AI that generates new content, including text, images, video, and audio, a point that was absent from the expert’s description. At a business-relevant level, generative AI can be used to, among other things, draft written content, generate marketing visuals and audio, prototype customer service responses, and explore data patterns rapidly.

C) Definition of Large Language Model (LLM):

📍Expert’s definition of Large Language Model (LLM) - from Podcast📱

[Image: LLM defined by Insurance company AI expert]

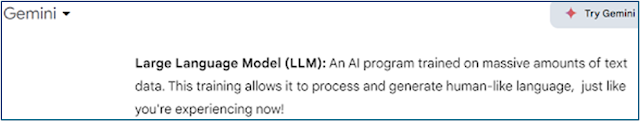

📍📍Observation: The insurance company's AI 'expert' is way off base on this one. Fact: an LLM is not a database. LLMs (short for large language models) are neural networks trained on text data to predict and generate language, not simply to store it.
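To make the "predict, not store" point concrete, here is a minimal sketch in Python. It is a toy word-pair counter, not a neural network and nowhere near a real LLM. The training text, function names, and parameters are all made up for illustration. What it shows is the basic idea: after training, the model holds statistics about which word tends to follow which, and it generates text by sampling the next word rather than looking up a stored record.

```python
import random
from collections import Counter, defaultdict

# Tiny made-up training text (an assumption, for illustration only).
TRAINING_TEXT = (
    "the policy covers data privacy . "
    "the policy covers employee training . "
    "the team reviews data privacy ."
)

def train_bigram_model(text):
    """Count which word follows which. The result is statistics, not stored sentences."""
    counts = defaultdict(Counter)
    words = text.split()
    for current_word, next_word in zip(words, words[1:]):
        counts[current_word][next_word] += 1
    return counts

def generate(model, start, max_words=6, seed=0):
    """Generate text by repeatedly sampling a next word, weighted by the learned counts."""
    rng = random.Random(seed)
    output = [start]
    for _ in range(max_words - 1):
        followers = model.get(output[-1])
        if not followers:
            break  # no known follower for this word, so stop
        candidates, weights = zip(*followers.items())
        output.append(rng.choices(candidates, weights=weights)[0])
    return " ".join(output)

model = train_bigram_model(TRAINING_TEXT)
print(model["the"])           # how often each word followed "the" in training
print(generate(model, "the"))  # text produced by prediction, one word at a time
```

A real LLM replaces the word-pair counts with billions of learned neural network weights, but the contrast with a database holds: you query a database to retrieve what was stored, while a language model produces the most plausible continuation.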

📍Definition of Large Language Model (LLM) - from ChatGPT & Gemini📱

[Image: LLM definition - ChatGPT]

[Image: LLM is not a dataset - ChatGPT]

📍📍Observation: The AI expert could have easily consulted ChatGPT and Gemini for an accurate LLM definition instead of fabricating facts. To reiterate, an LLM is not a database. Large amounts of data are used to train an LLM.

📌Section II - Description of an AI Policy

After offering inaccurate definitions of key AI terms, the expert went on to advise small business owners to “have an AI policy in your business.” At face value, that sounded reasonable. I continued listening, expecting more practical or meaningful guidance.

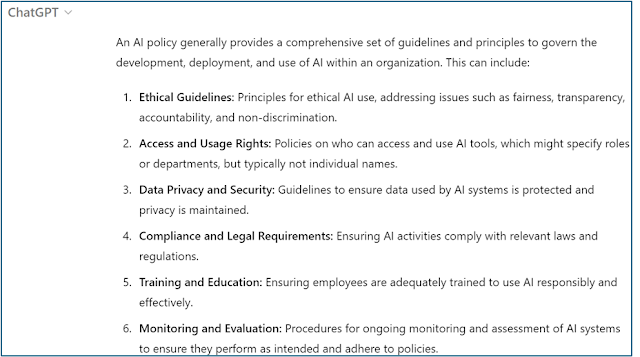

📍📍Observation: The expert’s recommendation was limited to asking business owners to identify areas where AI might be needed and to specify which employees could or could not use AI. That was the extent of the guidance. No explanation was provided on what an AI policy should actually cover, how it differs from existing policies, or how it should be implemented in practice.

Here’s the issue with that guidance. Deciding which employees can access which tools is not the core purpose of an AI policy. Individual user access, whether for AI or non-AI applications, is typically managed through IT security and access-control processes, not policy documents. An AI policy should not function as a permissions list. A small business may combine its policy and access documentation, or may not have a separate IT security team, but the distinction is still worth making because it is a general best practice.

At a minimum, an AI policy is meant to define principles and guardrails, such as how AI tools may be used, what types of data can or cannot be shared, and what risks or limitations employees should be aware of. By reducing an AI policy to access decisions alone, the expert overlooked its actual purpose — providing clarity, accountability, and risk awareness for AI use within a business.

A policy is generally a higher-level document that provides a set of guidelines and best practices. An AI policy would therefore provide guidelines and a framework for responsible AI use by the company and its employees. It could address AI ethics, access and usage rights, data privacy, compliance, training, and monitoring. The policy may also identify specific types of AI applications that require human oversight. A good AI policy should include:

- Clear definitions of responsible AI use
- Ethical guidelines
- Data privacy and security practices
- Training requirements for employees
- Monitoring and compliance mechanisms

This means clarifying what AI can and cannot do, and what safeguards employees should follow if AI tools are deployed.
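For readers who think in code, the difference between a policy guardrail and an access list can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the data categories, task names, user names, and the `check_request` helper are all hypothetical, and a real policy is a written document, not a script. The point is that the two questions ("may this person use the tool?" and "is this use acceptable?") are answered from different places.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class AIPolicy:
    """Hypothetical policy guardrails; every category name here is illustrative."""
    prohibited_data: frozenset = frozenset({"customer_pii", "payroll", "contracts"})
    human_review_required: frozenset = frozenset({"customer_email", "legal_summary"})

# Access list, maintained separately by whoever handles IT access (hypothetical entries).
TOOL_ACCESS = {"alice": {"chat_assistant"}, "bob": set()}

def check_request(policy, user, tool, data_category, task):
    """Return (allowed, reason) for a proposed use of an AI tool."""
    # Question 1: access control. This is not what the AI policy is for.
    if tool not in TOOL_ACCESS.get(user, set()):
        return False, "no access to this tool (access control, not policy)"
    # Question 2: policy guardrails on data and on tasks needing oversight.
    if data_category in policy.prohibited_data:
        return False, "policy: this data may not be shared with AI tools"
    if task in policy.human_review_required:
        return True, "allowed, but policy requires human review before use"
    return True, "allowed"

policy = AIPolicy()
print(check_request(policy, "alice", "chat_assistant", "customer_pii", "blog_draft"))
print(check_request(policy, "alice", "chat_assistant", "public_marketing", "customer_email"))
```

Note that an employee with full access to the tool can still be stopped by the policy, which is exactly the part the expert's guidance left out.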

Since the expert suggested checking ChatGPT for a free AI policy, here’s an extract of the AI policy guideline from ChatGPT.

📍ChatGPT's description of an AI Policy📱

[Image: AI Policy description - ChatGPT]

📌Section III - Guidance regarding AI Software

Thankfully, the expert then reached the final section, on AI software, and the rationale behind this podcast started to make sense.

[Image: Insurance company AI 'expert's' guidance on using AI software]

This was the only section where the expert provided genuinely useful business advice — practical guidance on choosing software tools.

However, it also suggested that the real goal of the AI 'expert' was not education — it was to position the expert’s own consulting services.

Helping Customers or Networking?

Remember, I’d mentioned earlier that the expert also ran a software consulting company? In that context, the podcast felt less like an educational effort for small business owners and more like a soft sales pitch disguised as AI education. It gave the expert direct access to over a million users, influencers, and decision makers, potentially opening up new consulting opportunities.

The insurance company also stood to benefit. Because it sells business-related policies, the repeated references to “policy” throughout the podcast could subtly encourage customers to review their existing coverage and possibly upgrade or purchase new products.

From that perspective, it appears to be a win-win arrangement for the expert and the insurance company — though not necessarily for the audience.

What Did Small Business Owners Gain from the AI Podcast?

At best, the AI podcast managed to deliver the following:

- Confusion: driven by inaccurate, half-baked definitions and poorly explained AI concepts.
- Disappointment: the content suggested a surprisingly low bar for the audience’s ability to understand AI, raising questions about whether the podcast was adequately reviewed or even taken seriously before being released.
- Entertainment (unintended): if the insurance company's goal was to educate small business owners, then it missed the mark. The irony is that both the insurance company’s team and the expert would likely benefit from foundational AI training themselves before attempting to teach others.
- Surprise: particularly at the expert’s claims of extensive writing and speaking on AI. One can’t help but wonder how such credentials go largely unquestioned across media, publishing, and conference circuits when even basic AI fundamentals appear shaky.

✍Final Thoughts

The "What Small Business Owners Need to Know About AI" podcast provided little to no practical value for small business owners.

Meanwhile, the expert continues to produce podcasts for the insurance company on AI and other topics, presumably at a healthy fee. I haven’t listened to the subsequent episodes — and don’t plan to. Based on this experience, these podcasts risk becoming a drain on both budget and credibility if basic fact-checking and quality control are absent.

One would hope that someone within the organization eventually questions whether this approach truly serves customers — and either raises the bar or rethinks the strategy altogether.

Until next time, folks. Stay sharp, stay curious 🎯🌍✨
